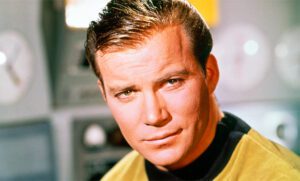

William Shatner, as Captain James T. Kirk, once stated that “risk is our business”. While he was referring to the mission of the starship Enterprise, the statement holds true for many disciplines and endeavors, including information security. Without risk there is no reward, but too much risk without commensurate benefit is not a proper direction either. There must be balance.

Recently the SEC announced a complaint against SolarWinds and its CISO involving misleading statements on the company’s information security posture. I would encourage all to read the complaint and not simply media summaries to get a complete picture of the nature of this action. This is a complaint, not a judgement, and all will have the opportunity to present their side of the story. Nor is this post an indictment one way or another. However, within is a possible teachable moment, and hence the reason for this post.

I often will say that the CISO’s, and by extension the vCISO’s, primary responsibility is to convey enough threat and vulnerability information, including recommendations, to the C suite and the Board of Directors so they may make risk-informed decisions. That’s a simple yet loaded statement; it requires many skills, including technical savvy, business acumen, and effective communication. Unfortunately, not all CISOs or vCISOs possess these necessary traits.

Generally, as I understand it, the SolarWinds information security department was aware of deficiencies in the company’s security posture, but public statements to investors did not reflect those concerns. What happened between the concerns and the statements? To me, it sounds strangely reminiscent of the Challenger tragedy in 1986. Morton Thiokol engineers vehemently cautioned against launching in the cold conditions, and management overrode them to keep up with external expectations. The result was a rapid, uncontrolled disassembly of the vehicle (it did not explode per se, but I digress). All seven astronauts died, and the shuttle program never fully recovered.

What happened? Did the launch decision makers know of the risks and ignore them? Or did the engineers not effectively communicate the risks? The Rogers Commission Report concluded that concerns were not adequately communicated to or considered by high-level NASA management, that there was pressure to maintain operations, and that there was an over-reliance on past successes as safety assurances. We can apply some of those lessons to information security.

I imagine SolarWinds had pressure to maintain operations and may also have relied on past successes to justify downplaying the probability and/or impact of their identified vulnerabilities being exploited; these would seem to be common elements in any risk situation. In flying, private pilots often refer to “get-home-itis”, a condition where the overshadowing desire to end the trip produces pressure, conscious or not, to keep accepting a steadily increasing risk environment until one link in the chain fails. A pilot who pushed the limits of their fuel tanks on one trip may feel compelled to do so again, despite a slightly greater headwind, because they’ve made it before. Often the result is fuel exhaustion and an unplanned contact with land (sometimes with fatalities).

But what was the last link that failed for SolarWinds? Was it a failure to communicate risk properly, a failure to understand risk, or callous disregard for risk? What I have not yet seen in the SolarWinds reporting is how the risks were communicated to the Board and executive leadership. A simple path begins with an information security risk assessment that identifies and prioritizes risks, continues with recommendations for treating the risks that exceed the organization’s tolerance, and ends with documented acceptance of the chosen risk treatment by those in authority. It is that last element which could lend significant clarity to the SolarWinds situation. If the CISO had documented the risks and their acceptance by those in authority, then the CISO would be in the clear. But who in the organization had authority to accept information security risks, and was that acceptance documented?
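For readers who think better in code, the simple path above can be sketched as a tiny risk register. This is only an illustration under my own assumptions, not anything drawn from the SolarWinds complaint: the 1 to 5 likelihood and impact scales, the tolerance threshold of 12, the field names, and the sample risks are all made up for the example.

```python
from dataclasses import dataclass
from typing import List, Optional

# Hypothetical threshold: any score above this needs a documented decision.
RISK_TOLERANCE = 12


@dataclass
class Risk:
    name: str
    likelihood: int                    # 1 (rare) to 5 (almost certain), illustrative scale
    impact: int                        # 1 (minor) to 5 (severe), illustrative scale
    recommendation: str = ""           # proposed treatment, if any
    accepted_by: Optional[str] = None  # who in authority signed off, if anyone

    @property
    def score(self) -> int:
        # Simple likelihood-times-impact scoring; real programs may weight differently.
        return self.likelihood * self.impact


def prioritize(risks: List[Risk]) -> List[Risk]:
    """Return risks ordered from highest score to lowest."""
    return sorted(risks, key=lambda r: r.score, reverse=True)


def undocumented(risks: List[Risk], tolerance: int = RISK_TOLERANCE) -> List[Risk]:
    """Risks above tolerance that have no recorded sign-off by someone in authority."""
    return [r for r in prioritize(risks)
            if r.score > tolerance and r.accepted_by is None]


# Made-up entries, purely for illustration.
register = [
    Risk("Weak remote access controls", 4, 5, "Enforce MFA on remote access"),
    Risk("Unpatched build server", 3, 5, "Patch within 30 days", accepted_by="CEO"),
    Risk("Outdated printer firmware", 2, 2),
]

for risk in undocumented(register):
    print(f"{risk.name}: score {risk.score}, no documented treatment decision")
```

The interesting output is the list of gaps: risks that exceed tolerance but carry no name in `accepted_by`. Those are the entries where the question of who decided, and whether it was written down, has no answer yet.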

All of this points to the need for a solid, structured information security risk management (ISRM) program. This is applicable for large, global organizations like SolarWinds as well as small startup businesses. In fact, I would submit that, in some ways, it is more imperative for SMBs to have a solid information security risk management program since they heavily leverage risk as they get their product or service off the ground. Next month I’ll dig into elements of an effective ISRM program, to include governance, assessment, recommendations, and perhaps most importantly, treatment authorizations and documentation, including acceptance.

If you’re an SMB struggling with understanding your information security risk, we can help. Contact info@vcisoservices.com for more information.